Claude’s Rapid Growth

A recent forecast from a Silicon Valley investment bank points to a disruptive shift in the AI industry: Anthropic, the company behind Claude, has grown 30-fold in just 15 months, with annual recurring revenue (ARR) reaching $30 billion, quietly surpassing OpenAI. This shift is driven by a fundamental change in enterprise procurement logic: from chasing the strongest model to selecting reliable production tools.

A few days ago, I examined an internal forecast chart from a Silicon Valley investment bank. The horizontal axis represents time, while the vertical axis shows annual recurring revenue (ARR). The chart has two lines: pink for Anthropic and blue for OpenAI. At the beginning of 2025, the blue line sat high above the rest of the chart, while the pink line lay dormant at the bottom, with a wide gap between the two.

However, by April 2026, the pink line quietly crossed above the blue line.

- Anthropic ARR: $30 billion

- OpenAI ARR: $25 billion

If the raw numbers don’t register, here’s another perspective: in January 2025, Anthropic’s ARR was only $1 billion. In just 15 months, it grew 30-fold.
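To put the 30-fold figure in monthly terms, here is a minimal Python sketch. It assumes ARR compounded at a constant monthly rate between the two figures quoted above, which the forecast itself does not claim; the function names are illustrative.

```python
import math


def implied_monthly_growth(start_arr: float, end_arr: float, months: float) -> float:
    """Constant monthly growth rate that turns start_arr into end_arr over `months`."""
    return (end_arr / start_arr) ** (1.0 / months) - 1.0


def doubling_time_months(monthly_rate: float) -> float:
    """Months needed to double at a constant monthly growth rate."""
    return math.log(2.0) / math.log(1.0 + monthly_rate)


# Figures from the article: $1B ARR in January 2025, $30B ARR 15 months later.
# Assumption: smooth compounding, which real revenue rarely follows.
monthly_rate = implied_monthly_growth(1.0, 30.0, 15)
doubling = doubling_time_months(monthly_rate)

print(f"Implied monthly growth: {monthly_rate:.1%}")
print(f"Implied doubling time: {doubling:.1f} months")
```

Under that assumption, the implied pace is roughly 25% per month, or a doubling about every three months.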

As product managers or business leaders, we must recognize that this is not just a simple growth story; it represents a textbook-level “restructuring of the landscape.” While ChatGPT struggles with how to convert its 900 million free users to paid Plus subscriptions, Claude has quietly reached into the core budgets of Fortune 500 companies.

The Exclusion Method

Many people see this number and immediately think: Claude’s model has improved, leading to increased revenue. This logic isn’t entirely wrong, but it doesn’t explain the speed of growth.

While model capability improvement is a necessary condition, such a leap from $1 billion to $30 billion cannot be explained solely by stronger models. Moreover, Claude was already quite capable in 2024; why did this growth happen specifically in the last 15 months, rather than in the previous two years?

Another explanation might be that OpenAI encountered problems. However, this is also incorrect. OpenAI’s absolute revenue is also growing, with an expected $25 billion this year, up from just over $10 billion a year ago. It hasn’t encountered issues; rather, its relative market share is shrinking. These are two entirely different matters.
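The difference between absolute growth and relative share can be made concrete with a small Python sketch. It uses only the figures quoted in this article and makes two loud assumptions: the two-company share of combined ARR stands in for market share, and the earlier figures ($10 billion for OpenAI, $1 billion for Anthropic) are treated as a single "before" snapshot even though they are not from exactly the same date.

```python
def two_company_share(own_arr: float, rival_arr: float) -> float:
    """Share of the combined ARR of two companies (a stand-in for market share)."""
    return own_arr / (own_arr + rival_arr)


# ARR in billions of dollars, taken from the article.
# Assumption: the "before" values are treated as one snapshot.
openai_before, openai_after = 10.0, 25.0
anthropic_before, anthropic_after = 1.0, 30.0

openai_growth = openai_after / openai_before
share_before = two_company_share(openai_before, anthropic_before)
share_after = two_company_share(openai_after, anthropic_after)

print(f"OpenAI ARR growth: {openai_growth:.1f}x")
print(f"OpenAI share of combined ARR: {share_before:.0%} -> {share_after:.0%}")
```

In this simplified view, OpenAI's revenue grows 2.5 times while its share of the combined total falls from about nine-tenths to under half, which is the distinction the paragraph above draws.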

So, what has truly happened?

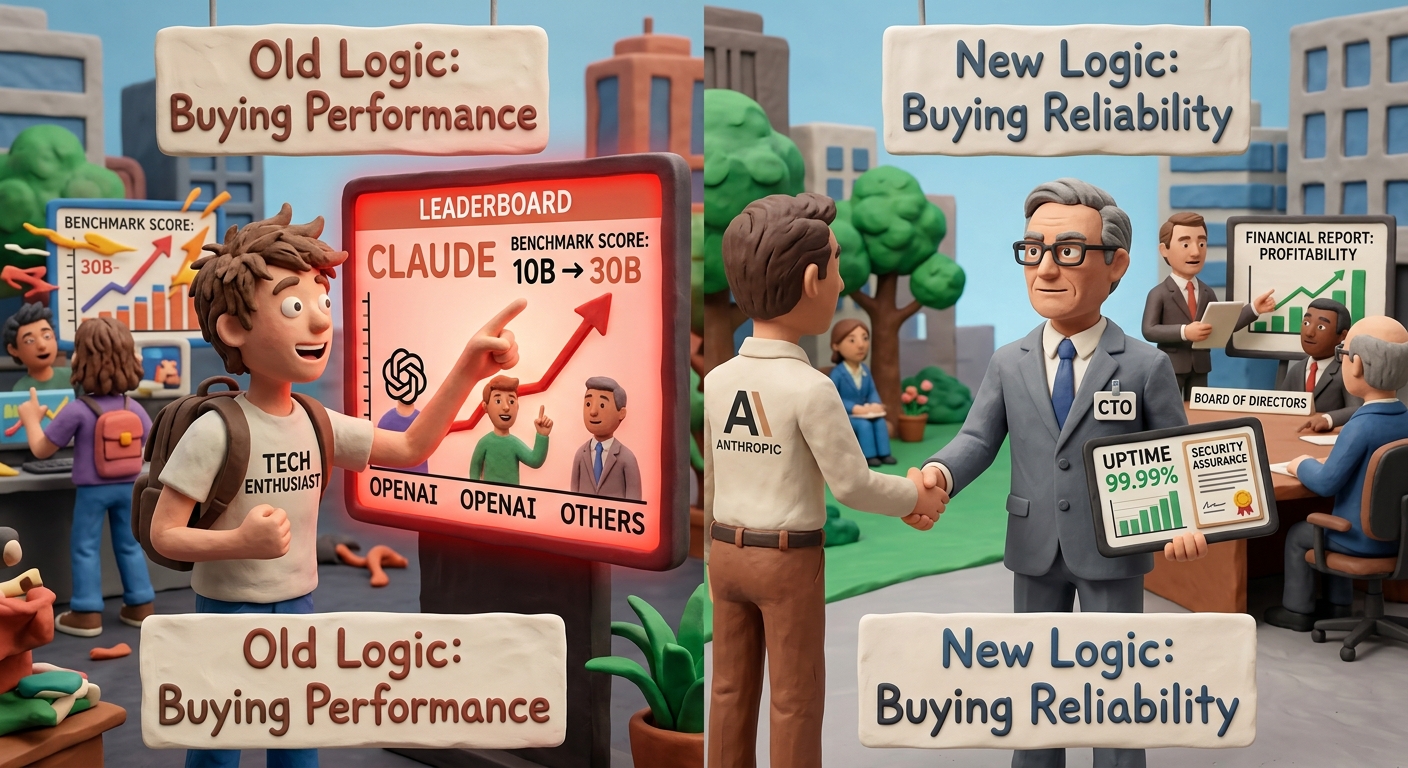

I believe the answer lies in a fundamental shift in the underlying logic of enterprise AI tool procurement over the past six months. The focus has shifted from purchasing the strongest models to acquiring the most reliable production tools.

These two statements may sound similar, but they target completely different buyer psychologies. The former appeals to tech enthusiasts, while the latter resonates with CTOs. The former cares about benchmarks, while the latter is concerned with whether they can explain any issues that arise and provide accountability in the next quarterly report to the board.

Anthropic has effectively captured the latter’s concerns, making a strategic pivot and achieving a remarkable turnaround.

500 to 1000: A More Significant Number Than $30 Billion

In February, when Anthropic announced its Series G funding, it revealed a significant figure: over 500 enterprise clients with annual spending exceeding $1 million. By April, this number had surpassed 1000, doubling in less than two months.

When I first saw this number, my first question wasn’t about the money, but rather: what are these companies purchasing?

Spending over $1 million annually is not just a case of a company trying out a few Claude API keys. It involves embedding Claude’s capabilities into core business processes, such as code review, compliance documentation, customer service, and internal knowledge bases. Once integrated, the cost of switching is extremely high. Replacing it means not just changing a tool, but retraining dozens of employees, reconnecting all APIs, and rerunning acceptance tests. In short, it’s not easy to switch.

This represents real, sticky revenue, not just trial data.

Even more interesting is the speed of this doubling. The rapid increase from 500 to 1000 in less than two months indicates an accelerating procurement decision window in the enterprise sector. Some are racing ahead, while others are following. This isn’t a natural growth pace; it’s a signal that a market consensus is forming, and enterprise AI tool selection is moving from observation to necessity, with Anthropic becoming the default choice.

CoreWeave’s 48 Hours: What Does It Indicate?

On April 9, CoreWeave announced a $21 billion computing power partnership with Meta, effective until 2032. The next day, CoreWeave announced a multi-year computing power agreement with Anthropic. Two significant transactions within 48 hours.

Many people focused on how much CoreWeave’s stock price increased, but I believe the more noteworthy aspect is the simultaneous occurrence of these two transactions.

CoreWeave now covers nine of the top ten AI model providers globally. It doesn’t need to take sides because the demand for production-grade AI inference is so high that no computing power supplier needs to choose between clients. This itself is a signal: the war for AI infrastructure is no longer about “who wins and who loses”; it’s about demand being so great that everyone can benefit.

For Anthropic, this agreement is significant not just for acquiring computing power. With 1000 enterprise clients spending over $1 million annually, these clients have extremely stringent SLA requirements; they cannot accept slower responses during peak times, nor can they tolerate service interruptions that halt business processes. Without a stable infrastructure foundation, having 1000 million-dollar clients is a precarious situation.

In other words, the CoreWeave agreement is Anthropic’s way of transforming enterprise client numbers from mere data into deliverable commitments.

Another detail worth noting: on the same day the CoreWeave agreement was announced, reports surfaced that Anthropic is exploring the possibility of developing its own AI chips. Viewed together, the logic is clear: stabilize production-grade loads in the short term with CoreWeave’s computing power while reducing reliance on external suppliers in the long term through self-developed chips. This is not the behavior of a company still celebrating its funding; it’s the behavior of a company already planning for five years down the line.

73% vs 27%: An Unasked Question

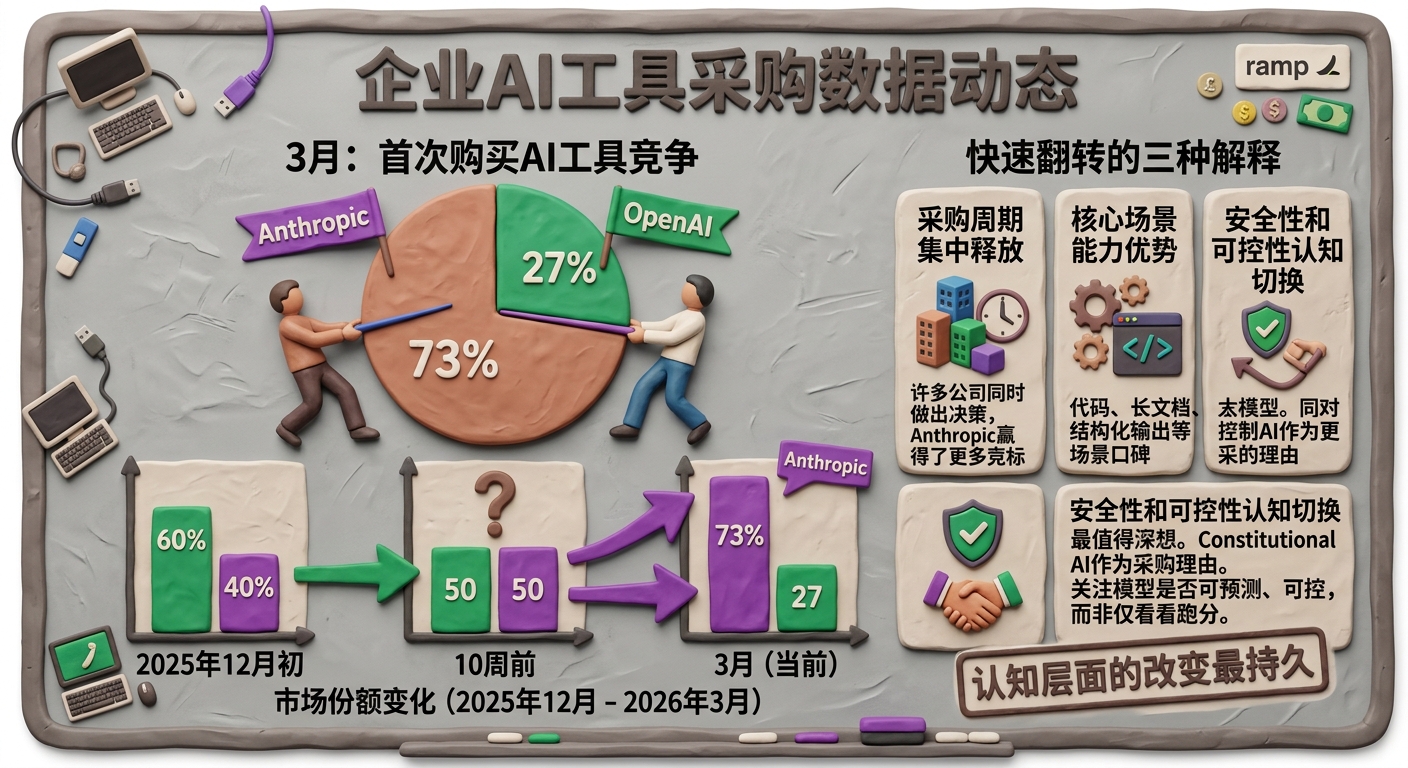

Ramp, an enterprise spending management platform, has tracked extensive procurement data for AI tools. In March, it released a set of figures: among enterprises making their first AI tool purchases, Anthropic won about 73% of head-to-head competitions, while OpenAI secured 27%.

Let’s pause for a moment to reflect on this number: 73% vs 27%.

Now, I want to ask a question that most people haven’t considered: how did this number come about?

Ramp’s data also includes a detail: just ten weeks prior, this ratio was 50/50. Looking back further, in early December 2025, OpenAI held a 60% share.

This flip happened unusually fast. In my years of working with AI products, I’ve seen many data trends, but I have never before seen an enterprise market split move from 50/50 to 73/27 in just ten weeks.
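One way to gauge the size of that move is to convert the win shares into odds. The Python sketch below is illustrative only: it assumes the head-to-head comparison is strictly two-way, so Anthropic's early-December share is taken as 100 minus OpenAI's 60%, and it assumes the change over the ten weeks was linear.

```python
def win_odds(win_share_pct: float) -> float:
    """Odds of winning a head-to-head deal, given the win share in percent."""
    return win_share_pct / (100.0 - win_share_pct)


# Anthropic's head-to-head win share in percent, from the Ramp figures quoted above.
# Assumption: the 40% value is derived as 100 - 60 (a strictly two-way contest).
snapshots = [
    ("early December 2025", 40.0),
    ("ten weeks before the March figures", 50.0),
    ("March figures", 73.0),
]

for label, pct in snapshots:
    print(f"{label}: {pct:.0f}% win share, odds {win_odds(pct):.2f} to 1")

# Average shift over the final ten weeks, assuming a linear change.
points_per_week = (73.0 - 50.0) / 10.0
print(f"Average shift: {points_per_week:.1f} percentage points per week")
```

Read this way, Anthropic went from even odds to winning close to three of every four contested first purchases, at an average pace of more than two percentage points per week.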

Such rapid shifts typically have three explanations:

- Concentration of Enterprise Procurement Cycles: Many companies made AI tool selection decisions simultaneously, and Anthropic happened to win more bids during this window. If this is the case, the 73% figure may revert, but Anthropic has already secured a substantial base of sticky clients.

- Claude’s Core Capability Advantage: Claude has a strong reputation in certain core scenarios like code, long document processing, and structured outputs. If this is the reason, the sustainability of this figure depends on whether OpenAI can catch up in these areas. OpenAI’s product pace has noticeably accelerated recently, so this battle is far from over.

- Shift in Corporate Perception of Safety and Control: This is, in my opinion, the most likely and thought-provoking explanation.

From day one, Anthropic’s core message has not been that it has the strongest model, but that it has the most predictable and controllable one. “Constitutional AI” is not just a technical term; it’s a reason for enterprise procurement decisions. It answers the question: when this AI does something undesirable in my production environment, can I explain why it happened and prevent it from recurring?

For a CTO who must sign off on financial reports, this question is far more critical than how many points a model scores on a benchmark.

If the third explanation holds true, then the sustainability of the 73% figure is the strongest. This is a cognitive shift, not just a functional comparison. Functional capabilities can be chased; cognitive shifts are much harder to reverse.

What Has Anthropic Done Right?

Rather than focusing on model capabilities, let’s discuss product decisions.

One often underestimated aspect is that Claude is currently the only cutting-edge AI model that simultaneously covers AWS Bedrock, Google Cloud Vertex AI, and Microsoft Azure Foundry.

OpenAI operates on Azure, while Gemini is on Google. Claude is available on all three.

This is not just a technical detail; it’s a distribution strategy. Enterprises typically do not purchase AI tools directly from Anthropic’s website; they integrate them through the cloud service providers they already use. If you’re already using AWS, your procurement process, billing, and compliance audits are all within AWS. Therefore, when selecting an AI tool, options that can be used directly on AWS naturally have an advantage over those requiring a separate account—it’s not about better functionality; it’s about lower friction.

Anthropic has positioned itself within the three largest enterprise procurement gateways. This decision compounds benefits every quarter.

Another number that supports this judgment: 80% of Anthropic’s revenue comes from enterprise clients. OpenAI’s revenue structure leans more towards consumers—out of 900 million monthly active users, the majority are free users, and OpenAI is still subsidizing their token consumption.

These are two entirely different business models. The consumer base looks impressive, but it burns cash and has low loyalty; users can easily switch based on a single review article. The enterprise user count is significantly smaller, but they renew contracts, expand their usage, and lock in agreements.

OpenAI is projected to lose $14 billion this year, while Anthropic anticipates achieving positive free cash flow by 2027, three years ahead of OpenAI’s break-even target.

At a similar revenue scale, one is burning cash while the other is moving towards profitability. This isn’t a matter of short-term luck; it’s a structural difference in business models.

Back to That Chart

I looked again at the pink line, which crossed above the blue line in April 2026. Then, I asked myself: if I were responsible for AI tool selection at a company right now, what would I be waiting for?

Not for a better opportunity. Not for a stronger model.

What am I waiting for?

If your answer is that you haven’t figured out what to use it for yet, then that is the real issue that needs addressing—not the tools, but your own clarity.

The 1000 enterprises spending over $1 million annually did not wait until they figured it out before they started using it. They figured it out while using it.

This may be the most significant signal behind the 73% figure that deserves serious attention.
